- Published: 16 April 2026

- Author: Gillian Matthews and Darren Treanor

- Read time: 8 mins

The National Pathology Imaging Co-operative has launched a public register of AI products to monitor available AI tools and make their clinical evidence easier to locate. Gillian Matthews and Darren Treanor highlight their research in the field.

As the transition to digital pathology gains momentum across NHS labs, attention is turning to AI software that could supplement digitised workflows. There is increasing recognition of the potential value of AI in pathology: from improving diagnostic precision and reducing turnaround times, to personalising treatments and predicting disease recurrence. Maintaining transparency in this fast-moving field is essential. To support this, the National Pathology Imaging Co-operative (NPIC) have launched a public register of AI products, to monitor available AI tools and make their clinical evidence easier to locate. In this article, we highlight some key findings from analysing the AI products on the marketplace, including details of how they were tested, and explain how this informs areas of focus for future AI evaluation.

AI poses both an extraordinary opportunity and a unique set of challenges for digitised pathology departments. There are numerous tasks where AI-based image analysis software has the potential to assist, e.g. highlighting tumour regions, performing cancer grading, estimating biomarker expression, and predicting patient response to treatment. These capabilities enable AI to support the pathology workflow in several ways, including triaging cases, accelerating review of slides, pre-ordering immunohistochemistry or additional tests, or offering a second read of the image to increase safety. As a result, AI heralds a promise of improving diagnostic precision, reducing turnaround times, lowering costs and personalising treatments.

However, achieving these lofty aspirations relies on AI tools showing reliable and consistent performance, and on users being aware of their limitations. Developing AI tools often involves exposing AI models to thousands of pathology images during training, which allows the model to then ‘recognise’ key features when presented with new, unseen images. But how well AI models perform on these unseen images depends on the type of data they were exposed to, and how well that data captures real-world diversity. This is of particular importance in digital pathology, given the image variation that can arise between labs, for example due to different staining procedures, sample preparation techniques, and/or scanning equipment. This variation can trip up AI models if they have not previously seen similar data. Therefore, when selecting AI models to deploy clinically in the NHS, understanding how these tools have been developed and tested is paramount.

NPIC is a national digital pathology programme, based at Leeds Teaching Hospitals NHS Trust, which is deploying digital pathology across regional and national NHS pathology networks. A core component of NPIC’s mission is to build a platform to support the development and evaluation of AI and generate independent evidence of AI performance on NHS data. As digitised Trusts begin to consider AI solutions to adopt into their workflow, NPIC have surveyed the AI marketplace to take stock of the current offering of AI tools for pathology. Our initial searches in 2023 identified 26 AI products for H&E image analysis with EU/UK regulatory approval,1 and we have since expanded this in 2025 to now include 90 products for H&E, IHC, and other stains.2

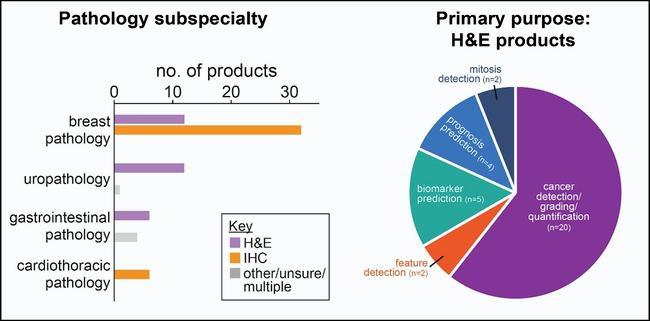

We analysed the key features of these products, which showed that, in both 2023 and 2025, the main clinical specialties represented among products for H&E image analysis were breast pathology (12 products) and prostate pathology (12 products), followed by gastrointestinal pathology (6 products). In terms of their primary function, most H&E products performed a combination of cancer detection, grading, and quantification tasks (20/33 products), but there were also AI tools available for estimating the expression of biomarkers such as HER2 (5 products) and predicting prognosis or treatment response for cancer patients (4 products). In addition, the vast majority of CE-marked products for IHC image analysis were intended for use in breast pathology. Thus, while there are currently AI tools available for a selection of potential use cases and clinical specialties, there is room for the field to grow. As the scope of AI products expands, ongoing monitoring will be important to ensure the tools in clinical use perform to a consistently high standard, and their functionality matches the needs of pathologists and patients.

Digging into data used for AI testing

With mounting enthusiasm for deploying AI tools, there is a growing need for high-quality, robust evaluation. This has been touted as a national priority for the NHS, to ensure safety and build confidence in the use of AI tools.3 One of the most consistent recommendations is to test AI models on diverse datasets that reflect real-world variation. This helps to ensure they will perform well in a new lab setting.

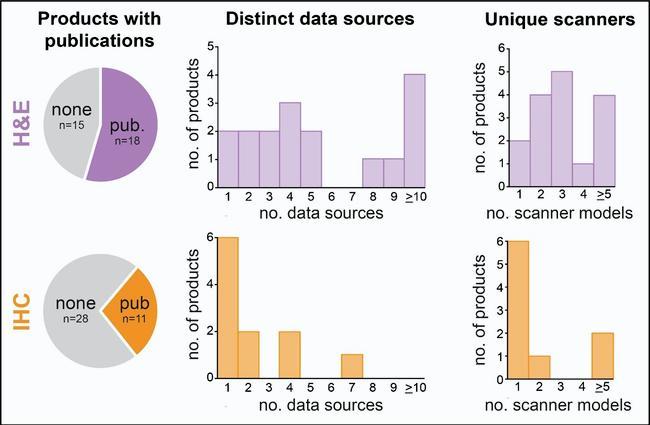

To understand more about the composition of datasets used to test AI products for digital pathology, we examined all available publications on AI products with regulatory approval. We found that only about half the haematoxylin and eosin (H&E) products had publications describing their development and testing, with no publicly accessible data for the remainder. Analysing the 41 available studies describing external testing, which covered 17/33 H&E products, we found that 6 products reported testing on 1–3 distinct sources of data, and 1 or 2 scanner platforms. For IHC products, only about a quarter of products had available publications, and for 6 of these 11 products, external testing had only been reported on 1 data source and 1 scanner platform. While some products had reported broader testing, the limited and variable dataset diversity in many cases is an area for improvement that future AI evaluation can focus on to help demonstrate robust, generalisable AI performance across data from different labs.

A further finding from our analysis was that only 4/33 H&E products and 2/39 IHC products reported testing on data from the UK. Limited testing on UK data is recognised by the National Institute for Health and Care Excellence (NICE) as a common limitation of AI products for image analysis, not only in pathology but across other fields of medical imaging. Thus, while AI holds great promise, there are shortcomings in evidence generation to be addressed as the field moves forward.
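For readers who prefer to see the proportions behind these findings, the counts quoted above can be turned into percentages with a few lines of Python. This is only an illustrative sketch: the labels, the `REPORTED` dictionary, and the function names are our own shorthand rather than part of the NPIC register, and the IHC denominator of 39 is assumed from the "2/39 IHC products" figure quoted above.

```python
# Counts quoted in the article text. The labels are illustrative shorthand,
# and the IHC total of 39 is assumed from the "2/39 IHC products" figure.
REPORTED = {
    "H&E products with published external testing": (17, 33),
    "IHC products with available publications": (11, 39),
    "H&E products tested on UK data": (4, 33),
    "IHC products tested on UK data": (2, 39),
}


def percentage(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total, 1)


def summarise(reported: dict) -> dict:
    """Map each label to its percentage."""
    return {label: percentage(count, total)
            for label, (count, total) in reported.items()}


if __name__ == "__main__":
    for label, pct in summarise(REPORTED).items():
        print(f"{label}: {pct}%")
```

On these figures, roughly 52% of H&E products and 28% of IHC products had the relevant publications, while about 12% of H&E products and 5% of IHC products reported testing on UK data.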

Supporting transparency with public AI registers

Harnessing the power of AI to improve NHS services is at the heart of the government strategy for NHS reform. However, as the use of AI in clinical practice gains traction, transparency is a necessity. Information on AI tools for clinical use needs to be readily available for all those with the potential to be impacted – from pathologists and lab managers to NHS commissioners and patients. This notion is supported by an NHS public survey on AI, which highlighted that the public want easy access to information about product compliance with medical device regulation to improve confidence and trust.4

Public registries have been offered as a solution to foster transparency, with the Regulatory Horizons Council recommending that the Medicines and Healthcare products Regulatory Agency (MHRA) construct a public-facing register of AI medical devices on the UK market, with details of their key features, intended use, and risk class.5 Taking heed of this advice, the Royal College of Radiologists launched a registry of regulatory-approved AI applications currently clinically deployed in the UK. To similarly uphold transparency in digital pathology, NPIC have compiled the information gathered in surveying the AI marketplace into an online register of AI tools. This open register contains public information on AI tools for image analysis, including their primary function, tissue focus, and published clinical validation studies. For those exploring the AI marketplace in digital pathology, this provides a first-pass resource: allowing potential tools to be easily located and offering further details on how they have been developed and tested. NPIC intend to expand and update the register to provide an ongoing independent source of data as deployment of AI begins to forge ahead at NHS Trusts.

Enabling robust AI evaluation in the NHS

Confidence and trust in AI-based software is integral to its success and will only be possible with transparency, rigorous testing, and ongoing demonstration of added value. To meet the government’s commitment to deliver “faster and at-scale real-world evaluations of AI” and to “roll out validated AI diagnostic tools” in 2027, NHS pathology departments need to be supported in AI evaluation activities used to inform the selection of products for clinical use.6 With the spread of digital pathology networks and Secure Data Environment (SDE) initiatives to facilitate data access, the NHS is in a prime position to lead the charge on robust evaluation of AI for digital pathology. As novel tools continue to emerge, monitoring the AI marketplace and making product information accessible to all will establish a culture of transparency and set the scene for ethical deployment of AI.

References available on our website.